Since Couchbase has a very interesting scaling pattern, with some metrics decreasing and some increasing as we add servers, we assigned a score to each of the three metrics we had (GET test duration, PUT test duration, QUERY average response time) and then added the scores together. Pr, the price of an instance, is in British Pounds per hour for an FMCI 16.192. This shows that the more nodes you have, the less efficiently you are spending money. Since the ratio between these operations is highly application-specific, the performance-to-price ratio will also look different from one application to another.

The impact of auto-compaction

Couchbase is an append-only database, and while this keeps it always consistent and avoids data corruption, an ever-growing file will eventually eat up all the available disk space.
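The scoring and pricing described above can be written down compactly. This is only a sketch under assumptions: the per-metric scores $s_{\mathrm{GET}}$, $s_{\mathrm{PUT}}$, $s_{\mathrm{QUERY}}$, the instance count $N$ and the ratio $R$ are symbols introduced here for illustration, and dividing by $N \cdot Pr$ assumes that hourly cost grows in proportion to the number of instances. Only $Pr$ and the fact that the three scores were added come from the text.

```latex
S = s_{\mathrm{GET}} + s_{\mathrm{PUT}} + s_{\mathrm{QUERY}},
\qquad
R = \frac{S}{N \cdot Pr}
```

Under this reading, adding nodes lowers $R$ whenever the combined score $S$ grows more slowly than $N$.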

The data was aggregated from all loaders and saved in CSVs. The time series was then analysed using Octave.

To test the query performance we used a map/reduce view, which was set up before running any other test. This test is designed to verify cluster behavior under normal operating circumstances, so the cluster was monitored continuously. No hardware limitation was hit during the tests. We used a combination of dstat, htop, iotop and Couchbase’s own UI to monitor all the hosts, including the loaders. We also made sure that the view was set up and published every time a bucket was re-created, and we waited until the cluster had settled before starting the tests.

The results show that the higher the number of compute instances, the longer GET and PUT operations take, so overall performance is lower. Our explanation for this is that each additional compute instance introduces additional network latency. Query performance, on the other hand, seems to increase linearly as we add servers, possibly because map/reduce operations are highly parallel in nature. Also, since each query operation takes more than 100 ms, the impact of network latency is no longer visible.

What a PUT run looks like

As JMeter does not support resolutions smaller than 1 millisecond out of the box, we had to adjust our custom sampler to calculate and export the duration using System.nanoTime(). This allowed us to analyse the real response times in Octave.

This is what a GET run looks like when analysed in Octave. Again, we had to use the same sample result time from our custom sampler, and we also removed outliers.

Query runs, on the other hand, take a lot longer to execute, so we don’t need the same sub-ms analysis.

We have also calculated the performance-to-price ratio.
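The sub-millisecond timing and outlier removal mentioned above can be sketched as follows. This is a minimal illustration, not the sampler from our repository: the class and method names are hypothetical, and the percentile cut-off is an assumed outlier rule, since the text does not say how outliers were removed.

```java
import java.util.Arrays;

final class SubMillisecondTiming {

    /** Runs the operation once and returns the elapsed time in fractional milliseconds. */
    static double timeMillis(Runnable operation) {
        long start = System.nanoTime();          // monotonic clock, nanosecond units
        operation.run();
        long elapsedNanos = System.nanoTime() - start;
        return elapsedNanos / 1_000_000.0;       // keep the sub-millisecond fraction
    }

    /**
     * Keeps only the samples at or below the given nearest-rank percentile,
     * preserving their original order.
     * Assumption: this cut-off rule is illustrative, not the rule used in the tests.
     */
    static double[] dropAbovePercentile(double[] samples, double percentile) {
        if (samples.length == 0) {
            return new double[0];
        }
        double[] sorted = samples.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(percentile * sorted.length / 100.0);
        int index = Math.min(Math.max(rank - 1, 0), sorted.length - 1);
        double threshold = sorted[index];
        return Arrays.stream(samples).filter(s -> s <= threshold).toArray();
    }

    public static void main(String[] args) {
        double[] samples = new double[1000];
        for (int i = 0; i < samples.length; i++) {
            // Math.sqrt is a stand-in for a real GET or PUT call.
            samples[i] = timeMillis(() -> Math.sqrt(12345.678));
        }
        double[] kept = dropAbovePercentile(samples, 99.0);
        System.out.printf("kept %d of %d samples%n", kept.length, samples.length);
    }
}
```

System.nanoTime() is only meaningful for measuring elapsed time within one JVM, which is why the sketch uses the difference between two readings rather than the raw values.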
The bucket that held the data was always deleted and recreated before running the tests. The auto-compaction feature was also disabled.

All the tests were executed using 1000 concurrent client threads on each loader machine, each thread instantiating a separate client instance, for a total of 2000 concurrent threads. The Couchbase clients discover the nodes participating in the cluster and connect to the individual nodes directly, thus yielding more than 2000 concurrent connections to the cluster.

All the hosts had the fs.file-max limit increased to 55k.
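The thread-per-client load pattern described above can be sketched in plain Java. This is an assumption-laden illustration rather than the JMeter setup itself: `LoaderSketch`, `runLoad` and the `clientFactory` parameter are hypothetical names, and the "client" is just a `Runnable` standing in for a real Couchbase client.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicLong;
import java.util.function.Supplier;

final class LoaderSketch {

    /**
     * Starts `threads` workers, each with its own client, and returns the
     * total number of operations completed across all workers.
     */
    static long runLoad(int threads, int opsPerThread, Supplier<Runnable> clientFactory) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        CountDownLatch startGate = new CountDownLatch(1);
        AtomicLong completed = new AtomicLong();

        for (int t = 0; t < threads; t++) {
            pool.execute(() -> {
                Runnable operation = clientFactory.get(); // one "client" per thread
                try {
                    startGate.await();                    // release all workers together
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    return;
                }
                for (int i = 0; i < opsPerThread; i++) {
                    operation.run();
                    completed.incrementAndGet();
                }
            });
        }

        startGate.countDown();
        pool.shutdown();
        try {
            if (!pool.awaitTermination(1, TimeUnit.MINUTES)) {
                pool.shutdownNow();
            }
        } catch (InterruptedException e) {
            pool.shutdownNow();
            Thread.currentThread().interrupt();
        }
        return completed.get();
    }

    public static void main(String[] args) {
        // Small numbers for illustration; the tests used 1000 threads per loader.
        long done = runLoad(8, 1_000, () -> () -> Math.sqrt(42.0));
        System.out.println("operations completed: " + done);
    }
}
```

Because every thread holds its own client and each client connects to the individual nodes directly, the number of open connections grows with both the thread count and the cluster size, which is why the file limit on the hosts had to be raised.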

The custom sampler instantiates the Couchbase client and then uses it to execute independent PUT, GET and then QUERY operations. The dataset is the Last.fm training dataset, which has about one million JSON-formatted song records. The nodes were added sequentially to the pool in increments of 2.

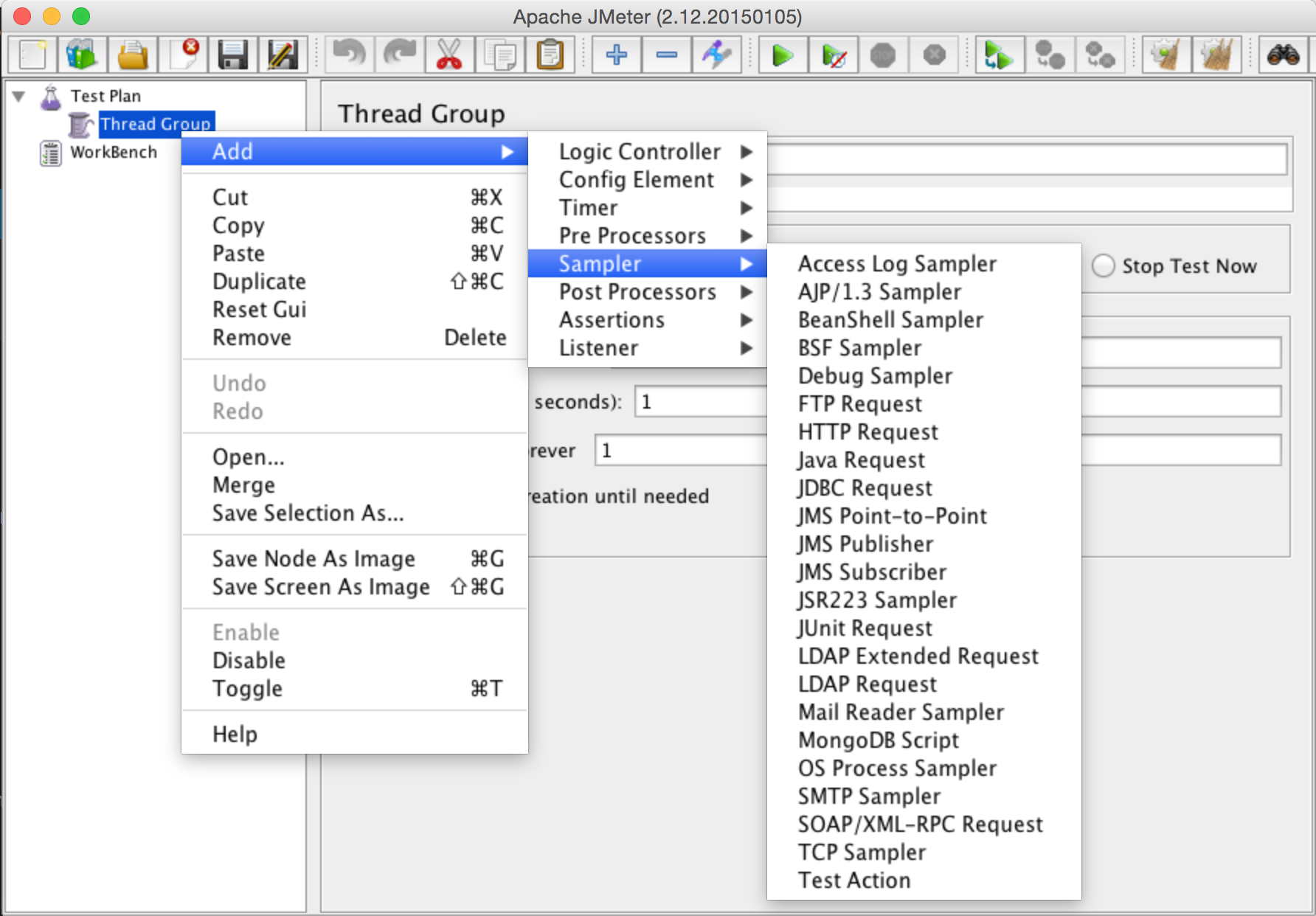

These nodes were connected by two independent 10 Gbps networks: one for the actual loading and inter-node communication, and the other for backend inter-loader communication. The nodes were also connected to our Solid Store iSCSI Block Storage via a third independent 10 Gbps link per node. The loader software was Apache JMeter 2.11 with custom samplers that we wrote, which are available in our GitHub repository.
